End-to-end • Internal Investigation Platform • AI-Assisted Workflows

Investigation at scale.

Then investigation at speed.

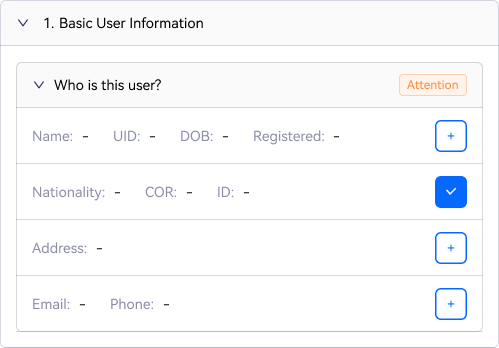

I led UX design for OKX's internal case management system across two versions.

CMS 1.0 tackled scale: investigators were managing complex multi-user cases with no tools to support them. CMS 2.0 tackled speed: even after the structure was right, analysts were still spending most of their time collecting data instead of making decisions.

The multi-user patterns from 1.0 carry forward into 2.0. These are two layers of the same platform, solving different problems at different depths.

30+

Related users per master case

Significant

Reduction in manual verification effort

4

Roles across the investigation chain

25

Typology categories supported in the AI investigation model

// The problem

The system was built for one analyst,

one user, one form.

The work had long outgrown it.

Financial crime investigations had evolved. Cases increasingly involved networks of 30+ related accounts, cross-jurisdiction patterns, and layered risk signals that no single analyst could hold in their head at once.

But the case management system was still designed for a single reviewer on a single user. Senior analysts carried a disproportionately large backlog relative to their case capacity, and at the original throughput the queue was not clearing.

Scale was the first problem. Speed was the second. And the second only became visible after the first was solved.

// Who we designed for

Four roles. One investigation chain. Very different failure modes.

L1 investigator

Alert Reviewer

High-volume triage. 7,800+ cases per week. Initial risk assessment, account activity, onboarding. Escalates when patterns emerge. Single-user focus.

L2 investigator

Case Analyst

Complex network patterns: 30+ related accounts, high-velocity transfers, layering activity across jurisdictions. Files suspicious activity reports. Per-user decisions required. Before CMS 1.0: pivot tables, Google Sheets, manual know-your-customer (KYC) review, all outside the system.

Team lead

Quality Gate

Reviews analyst dismissals for new joiners and severe cases. Confirms or sends back. Needs full narrative context on arrival, no capacity to re-investigate or chase clarification.

Reporting Officer

Final Regulatory Authority

Final call on suspicious activity report filing and account offboarding. Accountable to regulators if a decision is challenged. Cannot afford a second pass. Every decision, rationale, and evidence trail must be ready in one view.

Research method

Focused investigation workshop with analysts and team leads from Singapore, Europe, and Malta financial intelligence unit teams. Case walkthroughs, evidence packaging mapping, pain-point prioritisation with team leads and reporting officers.

// CMS 1.0

The system supported multi-user data.

Not multi-user decision-making.

Cases involving networks of related accounts were increasingly common, but the CMS was still built for a single reviewer. As a result, investigators had built their own parallel system out of Google Sheets, pivot tables, and manual copy-paste.

The CMS was where they submitted. The Sheets were where they worked.

Investigators lived outside the system

Evidence collection, KYC comparison, and pattern tracking all happened in spreadsheets, creating audit gaps, version conflicts, and duplicated effort across investigators.

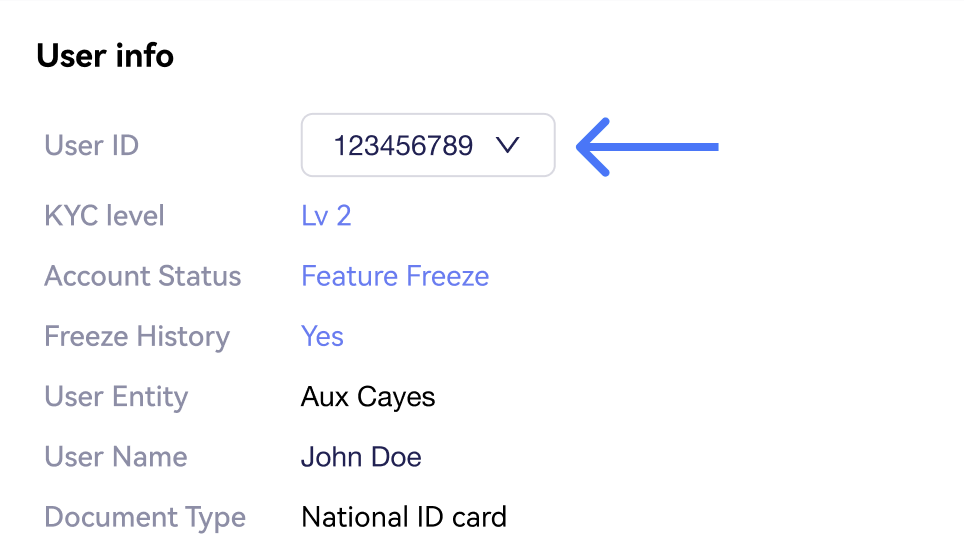

Navigation, not analysis

Multi-user cases existed only as a dropdown. No shared surface for comparison, risk clustering, or cross-user pattern detection.

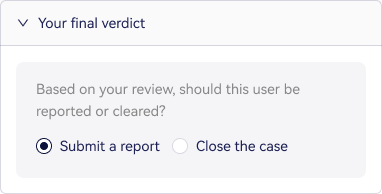

Case-level decisions, not user-level

Regulatory decisions (SAR filing, offboarding) are made per user, but the system only supported a single case-level outcome. The result was accountability gaps at every team lead (TL) and Reporting Officer review.

Evidence missing at the decision point

Investigators assessed identity consistency, but KYC documents were not accessible during case evaluation. That meant manual tab-switching to a separate system, every time.

// Design solutions

Three deep dives.

Scoped with PM. Each solution directly addresses a workflow breakdown identified in research.

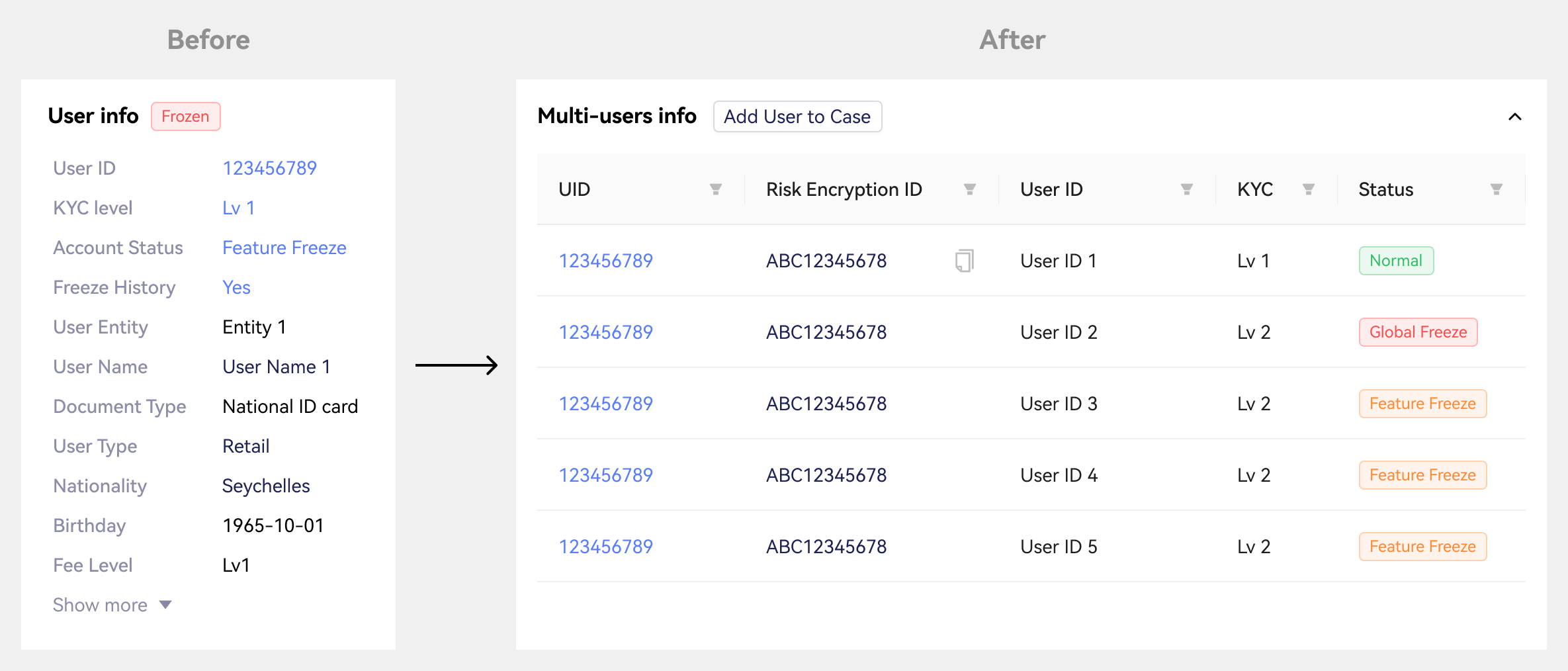

Deep dive 1 • Case Info Page

Multi-user comparison table

Users were navigation targets: you could switch between them via a dropdown, but there was no shared surface for comparison. We shifted to a table model that treats users as analyzable entities. Risk score, KYC status, freeze history, CRR score, and recommendation are now scannable across all users at once.

Before

Dropdown-based user switching

No comparison surface

Pattern detection impossible at scale

After

Comparison table with sortable columns

Risk, KYC, status all visible at once

Pagination for 30+ user cases
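
As a rough illustration of the table model described above, the sketch below treats each related user as a row that can be sorted by any column and paged. It is not the production implementation: `UserRow`, its field names, the 0-100 risk scale, and the page size of 10 are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserRow:
    """One related user in a master case (hypothetical fields)."""
    user_id: str
    risk_score: int   # assumed 0-100 scale, for illustration only
    kyc_status: str


def sort_and_paginate(rows, sort_key, page, page_size=10, descending=True):
    """Sort rows by one column, then return a single page (1-indexed)."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    ordered = sorted(rows, key=lambda r: getattr(r, sort_key), reverse=descending)
    start = (page - 1) * page_size
    return ordered[start:start + page_size]


# A 32-user case with made-up scores: highest risk first, 10 rows per page.
rows = [
    UserRow(f"user-{i:02d}", risk_score=(i * 37) % 100, kyc_status="verified")
    for i in range(32)
]
first_page = sort_and_paginate(rows, "risk_score", page=1)
last_page = sort_and_paginate(rows, "risk_score", page=4)
```

Sorting before paging is what makes the riskiest users appear first regardless of case size, which a dropdown could not do.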

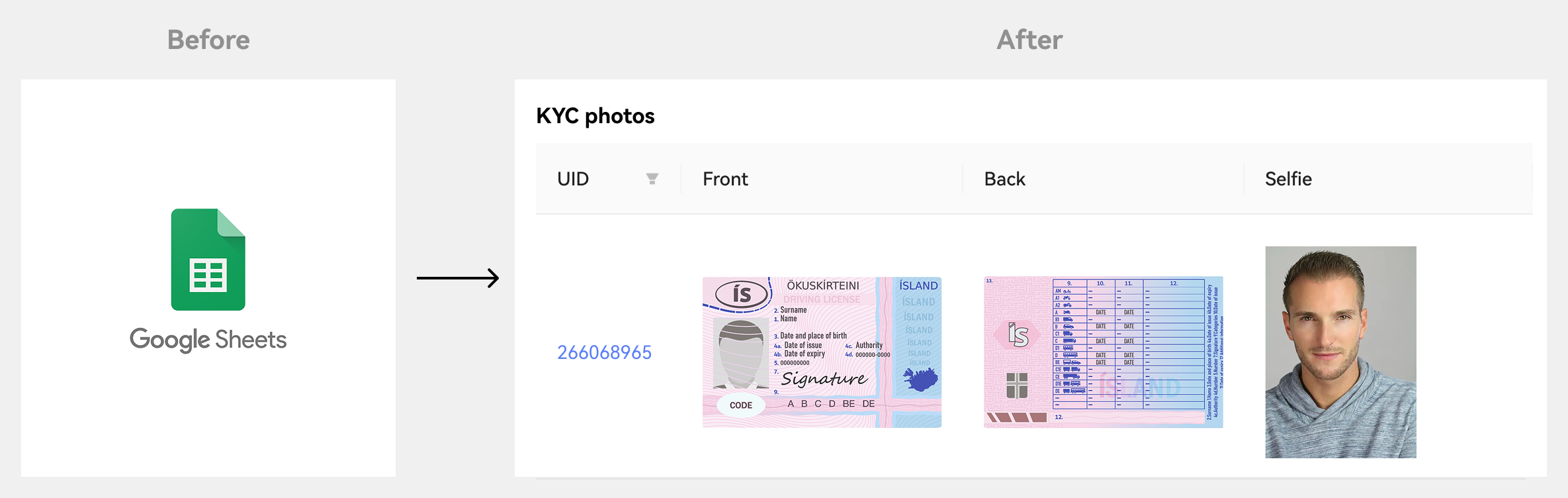

Deep dive 2 • Evidence in-flow

Bulk KYC Viewer & progressive disclosure

Investigators were asked to evaluate identity consistency, but KYC photos weren't surfaced during case evaluation. We added a collapsible KYC viewer showing all user photos in one grid, while using progressive disclosure to keep the primary investigation surface uncluttered.

Before

KYC accessed via a separate system

Manual tab-switching per user

No cross-user visual comparison

After

All KYC in one collapsible modal

Front / Back / Selfie per user

Risk detail in on-demand drawers

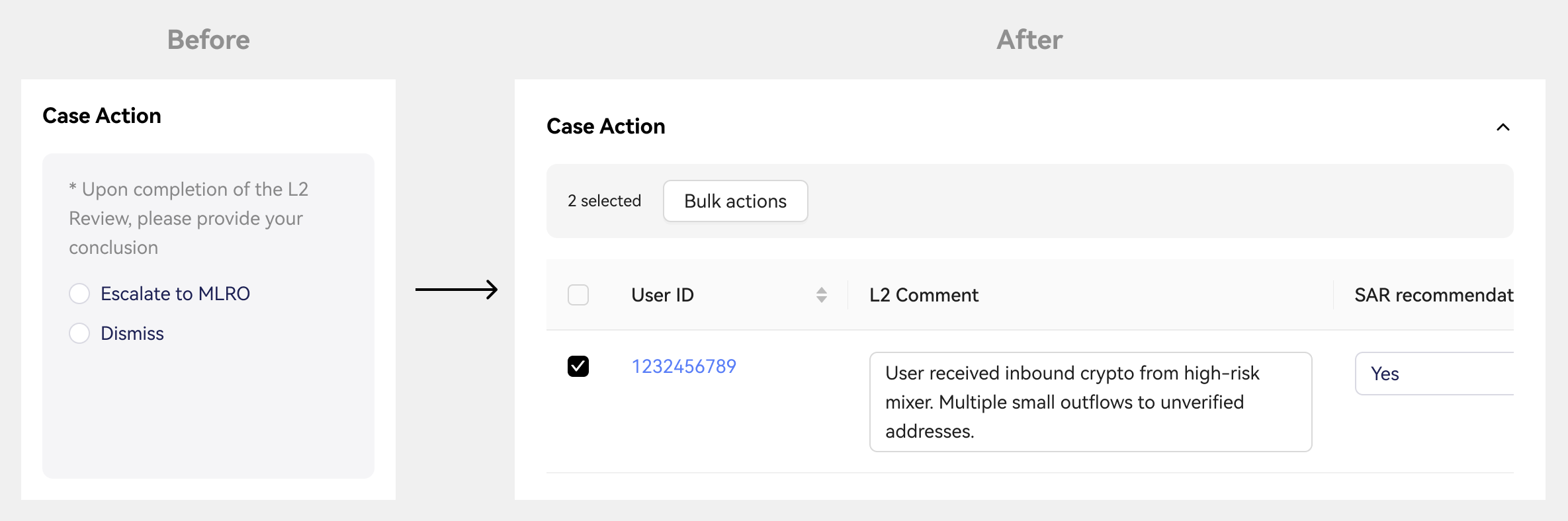

Deep dive 3 • Decision layer

Per-user case action with role-scoped decisioning

The review page had a single case-level conclusion, but SAR/STR filings and offboarding decisions are made per user. We redesigned the Case Action panel as a per-user decision table with L2 comments, SAR recommendation, SAR decision, offboarding outcome, and MLRO summary. Bulk actions are available with individual traceability, and editable fields are scoped by role.

Before

Single case-level conclusion

Ambiguous TL/MLRO accountability

No structured per-user rationale

After

Per-user SAR + offboarding decision

Role-gated edit access (L2/TL/MLRO)

Bulk apply with individual override
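
The per-user decision table can be sketched roughly as follows. This is a minimal illustration, not the actual permission matrix: the `EDITABLE_FIELDS` mapping, the field names, the actor IDs, and the audit tuple layout are all hypothetical. It shows role-gated edits, a bulk apply that still writes one audit entry per user, and an individual override afterwards.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-field mapping; the real matrix is not given in the text.
EDITABLE_FIELDS = {
    "L2": {"l2_comment", "sar_recommendation"},
    "TL": {"sar_recommendation"},
    "MLRO": {"sar_decision", "offboarding_outcome", "mlro_summary"},
}


@dataclass
class UserDecision:
    user_id: str
    values: dict = field(default_factory=dict)
    audit: list = field(default_factory=list)  # (actor, role, field, value)


def set_field(decision, actor, role, name, value):
    """Apply one edit if the role may touch the field, and record who did it."""
    if name not in EDITABLE_FIELDS.get(role, set()):
        raise PermissionError(f"{role} cannot edit {name}")
    decision.values[name] = value
    decision.audit.append((actor, role, name, value))


def bulk_apply(decisions, actor, role, name, value):
    """Bulk action: same value for every user, one audit entry per user."""
    for decision in decisions:
        set_field(decision, actor, role, name, value)


decisions = [UserDecision(f"user-{i}") for i in range(3)]
bulk_apply(decisions, "mlro_1", "MLRO", "sar_decision", "file")
# Individual override after the bulk action; both edits stay in the trail.
set_field(decisions[1], "mlro_1", "MLRO", "sar_decision", "do_not_file")
```

Routing the bulk action through the same single-edit function is one way to keep bulk changes as traceable as individual ones.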

// Phase 1 checkpoint

We solved the complexity problem. The efficiency problem was next.

What CMS 1.0 resolved

Investigation happens inside the system, Google Sheets dependency eliminated

Per-user decisions with clear role ownership across the full review chain

Identity documents surfaced at the decision point

Evidence in the case record, traceable and auditable

Gaps that remained

Average handling time still high

Investigation quality varied between analysts: same case type, different outcomes

No guarantee every case received the same depth of scrutiny

AI assistance limited to output. Manual data collection throughout investigation unchanged

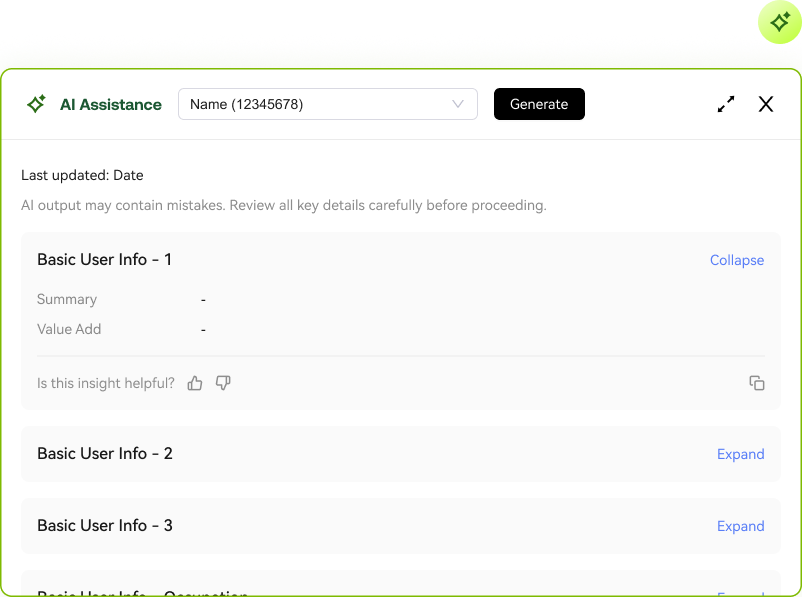

// The first AI attempt

We shipped AI assistance. The problem turned out to be deeper than the first version could reach.

A generative AI popup embedded in the case workflow

Surfaced AI-generated summaries per investigation section. But analysts still had to collect all the underlying data manually first. The AI summarised work that had already been done; it did not reduce it.

Gap: We added AI at the output. The bottleneck was at the input.

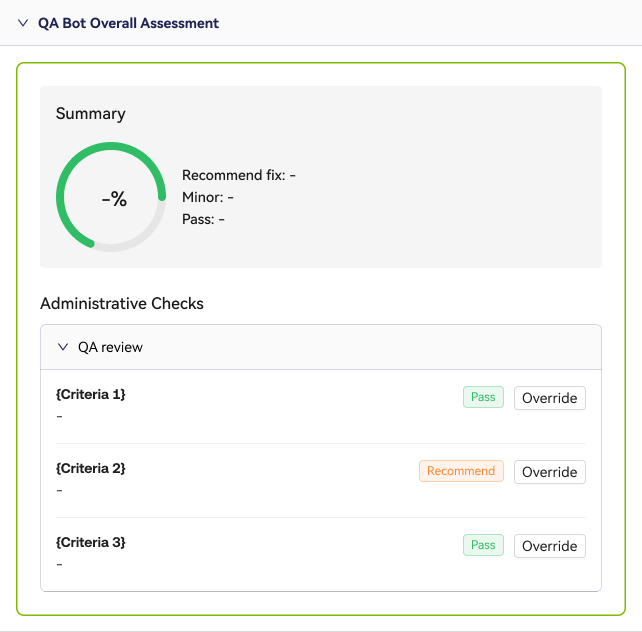

A QA AI agent: automated quality review on every submitted case

Scored each investigation across compliance checks after submission. It caught gaps, but only after investigation time was already spent. And when senior reviewers disagreed with a finding, there was no structured way to record it.

Gap: Post-submission review does not improve the investigation. Unstructured override leaves no trail.

// CMS 2.0

A new investigation experience.

AI-first, analyst-owned.

CMS 2.0 is a ground-up redesign of how investigation works. The system pre-analyses each case across all five investigation sections before the analyst opens it. The analyst arrives to structured findings, not a blank form.

The multi-user patterns from CMS 1.0 carry forward. CMS 2.0 builds on top, going deeper into the investigation itself.

Revamp 01

AI pre-analysis

Before the analyst opens a case, the system has already worked through it. Facts extracted, signals flagged, attention points surfaced. The analyst arrives to a structured view, not a blank form.

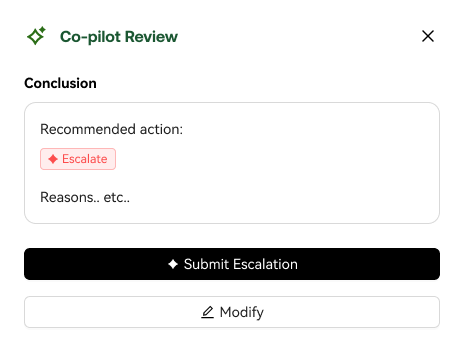

Revamp 02

Three-step investigation flow

The AI recommends an action. The analyst reviews it. If they agree, they submit. If not, they modify. The flow makes the analyst's relationship with AI output explicit: review first, decide second. The system suggests. The analyst calls it.
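
One way to picture the flow is as a small state object that refuses to submit before review. This is a sketch only: `CaseReview`, its attributes, and the action strings are hypothetical names, and the real system's states are not described in this case study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CaseReview:
    """Recommend -> review -> submit or modify (illustrative model)."""
    ai_recommendation: str
    reviewed_by: Optional[str] = None
    final_action: Optional[str] = None

    def review(self, analyst: str) -> None:
        # Step 2: the analyst explicitly reviews the AI recommendation.
        self.reviewed_by = analyst

    def submit(self, modified_action: Optional[str] = None) -> str:
        # Step 3: accept the recommendation as-is, or submit a modified action.
        if self.reviewed_by is None:
            raise RuntimeError("review the AI recommendation before submitting")
        self.final_action = modified_action or self.ai_recommendation
        return self.final_action

    @property
    def overridden(self) -> bool:
        # True when the analyst's final call differs from the AI suggestion.
        return (
            self.final_action is not None
            and self.final_action != self.ai_recommendation
        )


agreed = CaseReview(ai_recommendation="dismiss")
agreed.review("analyst_1")
agreed.submit()

changed = CaseReview(ai_recommendation="dismiss")
changed.review("analyst_2")
changed.submit(modified_action="escalate")
```

Keeping the AI recommendation and the final action as separate fields means agreement and disagreement are both recorded against a named analyst.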

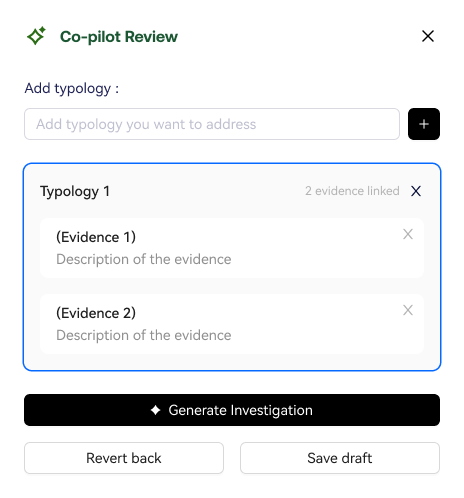

Revamp 03

Typology and evidence selection

The analyst selects the risk typology and links specific facts as evidence. This shapes what the AI generates: the analyst is directing the investigation, not just reviewing it. The AI drafts. The analyst owns.

Outcomes


CMS 1.0 multi-user case

Investigation fully inside the system, external spreadsheet dependency eliminated

Clear role ownership across the full review chain

Significant reduction in manual verification effort and report preparation time

CMS 2.0 AI-native

Targeting meaningful reduction in average case handling time

Quality and AI engagement instrumented and tracked from launch

// Closing

Financial crime investigation is not a data problem.

It is a decision quality problem.

We built for scale first, so investigators could handle the complexity of connected cases together.

Then for speed, so the system does the groundwork, and analysts focus on the judgement.

The hardest challenge across both was not building the features. It was making sure every decision stayed traceable to the person who made it.